The Agents Work. The Organization Does Not.

Microsoft studied AI adoption across 300,000 enterprise employees. They documented a pattern nobody expected: the best performers, the people you would bet on to adopt fastest, quietly abandoned AI tools after three weeks.

Not because the tools were bad. Because nobody redesigned the work around them.

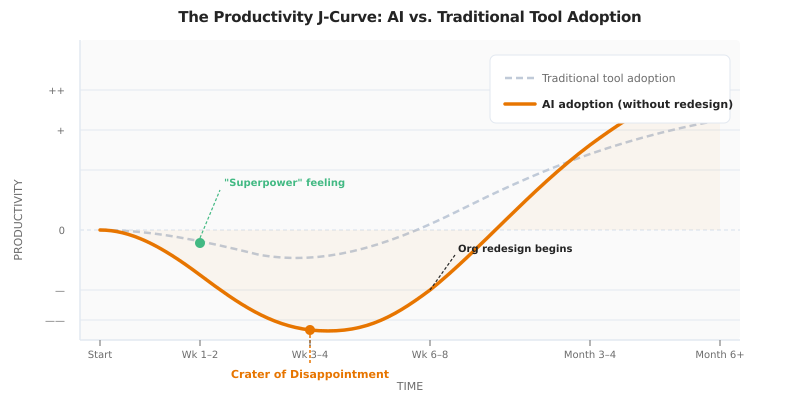

The first two weeks felt like a superpower. Code generated in minutes. Reports drafted in seconds. Then week three arrived. The gains stalled. The AI hallucinated something expensive. Nobody had a process for catching it. The tool started feeling like overhead. Adoption quietly faded.

Industry researchers call this the “crater of disappointment.” The DORA 2025 report confirmed it with hard data: teams exhibiting higher rates of AI tool usage demonstrated increased change failure rates and spent significantly more time on manual rework.

More AI usage. Worse outcomes.

The models did not fail. The organization did.

Ninety-five percent of generative AI implementations do not deliver a positive ROI. Deloitte surveyed 3,200 executives and found only 25% have moved even 40% of their AI experiments into production. The World Economic Forum talked to 20+ technology firms and heard the same thing: “AI is implemented as a bolt-on tool, sprinkled across existing tech stacks. These situations do not generate value.”

Erik Brynjolfsson, director of Stanford’s Digital Economy Lab: “The real bottleneck in the digital economy is not invention. It is diffusion.”

In my last post, I argued that specification is the new scarcity. That was half the story. Specification requires specifiers. Specifiers work inside organizations. And most organizations were never redesigned to run an agentic workforce.

The J-Curve Nobody Planned For

Every technology transition follows a productivity J-curve. Adopt a new tool. Productivity dips before it rises. This is not new.

What is new is the depth and speed of the dip with AI.

Traditional tool adoption, like migrating from on-prem TFS to Azure DevOps, created a shallow dip over a few weeks. The new platform was different, but the nature of the work stayed the same. You still wrote code. You still reviewed code. The tool changed. The job did not.

AI adoption creates a deep, structural dip because the nature of the work changes underneath you. You stop writing code and start reviewing code. You stop executing tasks and start specifying them. You stop being a developer and start being a manager of synthetic developers.

That is not a tool change. That is a role change. And role changes break people.

The DORA data shows exactly why. Developers using AI tools reported perceiving they were 24% faster. Empirical measurements showed they were actually taking 19% longer to deliver stable updates. The gap between perceived speed and actual stability is where the J-curve lives.

Reading code exhausts a different cognitive pathway than writing code. You can write for hours. Reviewing code written by someone else, catching subtle logic errors, verifying edge cases you did not author, that drains your attention faster. Multiply this by every AI-generated output across your team, and the cognitive load compounds invisibly.

McKinsey Health Institute data puts a number on this: 1 in 5 professionals currently experience severe burnout symptoms, including cognitive impairment and mental distancing, driven by the mandate to integrate probabilistic AI outputs into deterministic business processes.

The organizations that survive the J-curve are not the ones with the best models. They are the ones that redesign the work before they deploy the tools.

Deploying AI without redesigning the organization is automating a traffic jam.

You Cannot Skip Stages

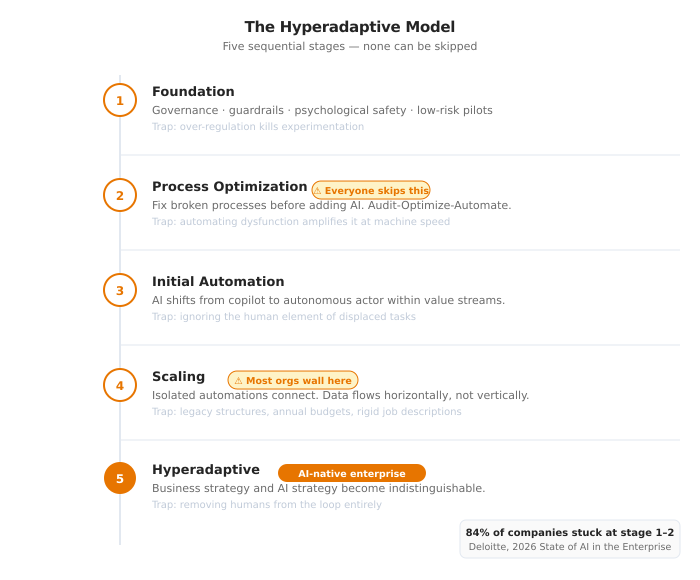

The Hyperadaptive Model, published by IT Revolution, maps the path from traditional enterprise to AI-native organization across five sequential stages. Each stage builds on the last. None can be skipped.

Stage 1: Laying the Foundation. Governance, guardrails, psychological safety. The goal is to move the workforce from apprehension to possibility. Low-risk pilots. Middle-out champions who experiment and share results. The trap: implementing regulation so heavy that it kills experimentation before it starts.

Stage 2: Process Optimization. Fix the underlying processes before you add AI. This is the stage everyone wants to skip. It is also the stage that determines whether everything after it succeeds or fails. The trap: automating a broken process, which just amplifies waste at machine speed.

Stage 3: Initial Automation. The transition from AI as copilot to AI as autonomous actor. Humans shift from executing tasks to managing agents within isolated value streams. The trap: ignoring the human element. People watch their tasks move to machines and nobody addresses what that feels like.

Stage 4: Scaling. Connecting isolated pockets of automation into a cohesive whole. Data and decisions flow horizontally across value streams instead of vertically through management chains. The trap: scaling friction. Legacy structures, annual budgets, rigid job descriptions, they fight the new fluidity. This is where most organizations hit a wall.

Stage 5: Hyperadaptive. Business strategy and AI strategy become indistinguishable. The distance between market insight and operational action collapses to near zero. The trap: removing humans from the loop entirely. Agents execute flawlessly. They do not provide ethical judgment.

Deloitte’s survey confirms most organizations are stuck early. 84% of companies have not redesigned jobs or the nature of work itself around AI capabilities. They expanded access by 50% in a single year, but fewer than 60% of workers with access use AI daily. Access grew. Activation did not.

Here is what the research makes unambiguous. Organizations that attempt to jump from Stage 1 directly to Stage 4, skipping the unglamorous work of fixing broken processes and building trust, experience what the research calls “integration crashes.” They deploy capable agents into dysfunctional workflows and get dysfunction at higher velocity.

This is the same lesson every Agile transformation taught. You cannot bolt a new methodology onto a broken culture and expect the methodology to fix the culture. The methodology exposes the culture. AI exposes it faster.

I wrote about this in Procedure Over Intelligence: Agent Skills encode organizational wisdom into executable workflows. But Skills only work when the organization has wisdom to encode. Stage 2 is where that wisdom gets created. Skip it, and your Skills encode the wrong things.

You cannot bolt an AI workforce onto a broken organization and expect the AI to fix the organization.

Automating a Broken Process Breaks It Faster

This deserves its own section because it is the single most expensive mistake teams make.

The instinct is natural. “This process is slow. Let’s automate it.” Fast, logical, and catastrophically wrong when the process itself is the problem.

A team has a three-day document review workflow. They deploy agents to process documents. The agents are fast. Documents flow through in hours instead of days. Then someone notices: the agents are making the same categorization errors the human reviewers made, just 50x faster. The errors compound. Downstream systems ingest bad data at machine speed. The cleanup costs 5x to 10x what the original manual process cost.

The agents did exactly what they were told. The instructions were wrong. They were wrong before the agents existed. The manual process had the same errors, but at human speed they were caught and corrected informally by the people doing the work. Remove the humans, keep the bad process, and the informal correction layer vanishes.

The fix is a framework the research calls Audit-Optimize-Automate.

Audit. Deconstruct the existing workflow. Find the real bottlenecks. Often, the perceived bottleneck is a symptom. The actual constraint is upstream, usually ambiguous inputs or undefined edge cases.

Optimize. Redesign the process manually. Standardize inputs. Define success criteria. Fix the logic before any AI touches it. This feels slow. It is the only step that prevents compounding errors at scale.

Automate. Only after the logic is sound and manually verified does it get handed to an agent.

The order matters. Automate first, and you scale the dysfunction. Audit first, and you scale the fix.

AI does not fix processes. It reveals which ones were broken all along.

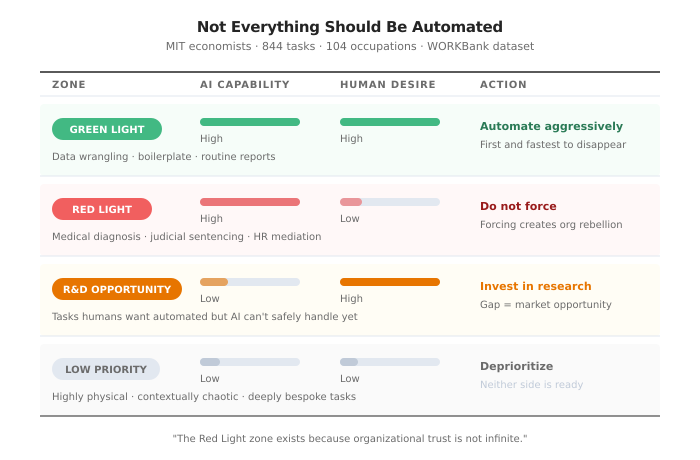

Not Everything Should Be Automated

This is the one most AI discourse gets wrong.

The assumption is that everything AI can do, AI should do. The research says otherwise.

MIT economists analyzed 844 discrete tasks across 104 occupations using the WORKBank dataset. Instead of a binary “automate or not,” they mapped every task against two dimensions: can AI do it, and do humans want it done by AI?

Four zones emerge.

Green Light Zone. High capability, high desire. Cognitive drudgery. Data wrangling, boilerplate compilation, routine report generation. Automate aggressively. These tasks disappear first and nobody misses them.

Red Light Zone. High capability, low desire. AI can technically execute these tasks, but humans resist for ethical, legal, or deeply interpersonal reasons. Medical diagnosis delivery. Judicial sentencing. Complex HR mediations. Forcing automation here creates organizational rebellion, not efficiency.

R&D Opportunity Zone. Low capability, high desire. Tasks humans desperately want to offload, but current models cannot handle safely. This is where venture capital and AI research should focus. The gap between desire and capability is the market opportunity.

Low Priority Zone. Low capability, low desire. Highly physical, contextually chaotic, or deeply bespoke tasks that neither machines can perform nor humans wish to relinquish.

The Green Light zone is where every team starts. It is also where most teams stop.

The strategic mistake is treating automation as a spectrum from “none” to “everything.” It is not a spectrum. It is a map with hard boundaries. The Red Light zone exists because organizational trust is not infinite. Forcing AI into spaces where humans have strong objections, regardless of technical capability, destroys the trust needed to adopt AI everywhere else.

Respect the zones. The Red Light tasks are not failures of technology. They are signals about organizational values.

Not everything AI can do should be done by AI. The zones are not obstacles. They are the architecture.

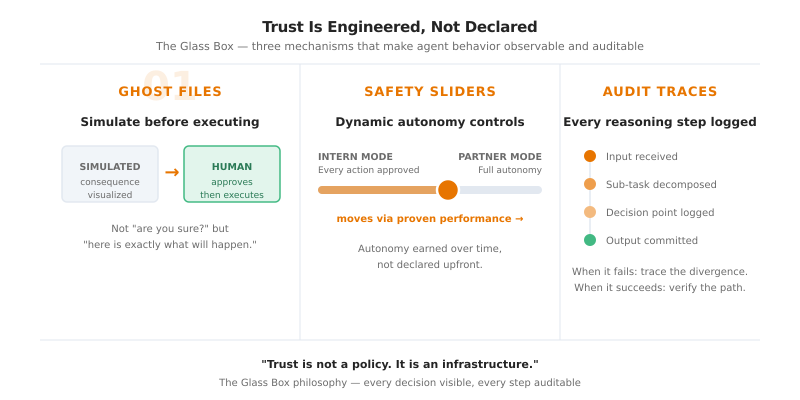

Trust Is Engineered, Not Declared

You cannot announce trust into existence. “We trust our AI systems” is a press release, not an architecture.

Trust in autonomous systems follows a pattern every engineering discipline has learned separately. Aviation, nuclear power, autonomous vehicles. Each went through the same evolution: from blind trust (“the computer knows best”) to earned trust (“the computer proved it can handle this specific scope, under these specific conditions, with this specific fallback”).

The emerging architecture for earned trust in AI systems uses what researchers call the “Glass Box” philosophy. Not a magic box where you put in a prompt and hope for the best. A glass box where every decision, every intermediate step, every assumption is visible and auditable.

Three mechanisms make this concrete.

Ghost Files. Before an agent commits to any destructive or permanent action, it generates a simulated outcome. The human inspects the exact consequences before anything executes. Not “are you sure?” but “here is precisely what will happen, visualized, before it happens.” The difference between a confirmation dialog and a simulation is the difference between ceremony and safety.

Safety Sliders. Dynamic autonomy controls. Start in “Intern Mode,” where every sub-task requires human approval. As empirical reliability is proven over time, slide toward “Partner Mode” with increasing autonomy. The slider moves based on demonstrated performance, not declared confidence.

Auditable Decision Traces. Every reasoning step logged. Not just inputs and outputs, but the intermediate chain. When the system makes a mistake, you can trace exactly which decision diverged. When it succeeds, you can verify the path was sound, not just lucky.

This connects directly to what I built in Beyond the Million-Token Window with scratchpad routing. The scratchpad created an auditable reasoning trace for document navigation. The same principle applies to organizational trust. Auditability is not overhead. It is the mechanism by which trust is earned.

The organizations that achieve Stage 4 and Stage 5 in the Hyperadaptive Model are not the ones that deployed the most powerful agents. They are the ones that made agent behavior observable enough that trust could be continuously verified.

Trust is not a policy. It is an infrastructure.

Ten Fluent People Outperform Five Hundred Trained Ones

This is the finding that should reorganize how every enterprise thinks about AI training.

A team of 10 people with deep AI fluency, people who understand task decomposition, can identify what AI should and should not do, and know how to specify work precisely, will consistently outproduce 500 employees who received standard AI training.

The gap is not about prompting skill. It is about what the research calls the “Judgment Layer.”

The 101 Level of AI fluency is where most organizations stop. Employees learn basic chat interactions. They copy-paste outputs. They treat AI as a faster version of Google. When the AI hallucinates or produces low-quality output, they have no framework for diagnosing why or fixing the interaction. They hit the crater of disappointment and quit.

The 201 Level is where value lives. At this level, people can:

- Separate sub-tasks that require human judgment from sub-tasks suitable for AI execution

- Iterate on AI output instead of accepting or rejecting it wholesale

- Recognize the symptoms of context pollution and organizational drift before they compound

- Specify work precisely enough that agents execute reliably without hand-holding

This is not a training problem. It is a systems design problem. The same distinction I drew in The Bottleneck Moved: “The teams that made the transition are not better at prompting. They are better at specifying. That is a systems skill, not a personal one.”

The organizational implication is uncomfortable but clear. Broad, shallow AI training produces the worst possible outcome: an entire workforce that uses AI poorly, generates confident-looking garbage, and creates a perception that AI “doesn’t work” for this organization. Meanwhile, a small team with genuine fluency rewires the same workflows and delivers 10x the output.

Deloitte’s survey names insufficient worker skills as the single biggest barrier to AI integration. Yet most companies respond by educating broadly rather than redesigning deeply. 53% raise general AI fluency. Far fewer rearchitect roles, workflows, or career paths. One logistics director captured the difference: the goal is not teaching people to use AI tools. It is turning “today’s pricing analysts into pricing strategists.” That is the jump from 101 to 201.

The counterintuitive move: invest deeply in a small cohort rather than broadly in everyone. Let the cohort redesign the workflows. Then train the rest of the organization on the redesigned workflows, not on AI tools in the abstract.

Mass training produces mass mediocrity. Deep fluency produces leverage.

The Window Is Shorter Than You Think

Everything above becomes urgent when you look at what is coming.

Gartner predicts that by 2028, 90% of all B2B purchases will be initiated, evaluated, negotiated, or completed autonomously by AI agents. That is $15 trillion in global corporate spending shifting into machine-to-machine commerce.

When purchasing agents evaluate your organization, they will not read your marketing collateral. They will query your APIs. They will audit your data layers for consistency. They will benchmark your system performance against competitors in real time. If your infrastructure is not optimized for agentic evaluation, you are invisible to the primary buyers in the marketplace. Not uncompetitive. Invisible.

Simultaneously, the SaaS model is disintegrating. The research calls it the “Road Runner Economy,” after the cartoon coyote who runs off a cliff and keeps sprinting in midair. Giant portions of the commercial software industry are suspended over a structural abyss. The math is brutal. Enterprise CRM: $150-300 per user per month. AI-generated custom alternative: $50-100 per month for the entire organization. Not per user. Total.

Within 24 to 36 months, “off-the-shelf” software will become synonymous with compromise. “Generated-for-you” will be the baseline expectation. Deloitte found that 36% of surveyed companies expect at least 10% of their jobs to be fully automated within a year. Within three years, that number jumps to 82%. The organizations that cannot generate custom software at this speed, because their processes are broken and their people lack fluency, will pay premium prices for generic tools while their competitors run custom solutions at a fraction of the cost.

And the pace is accelerating. Anthropic’s engineers have largely stopped writing code manually. They manage AI agents that write the architectural code for the next generation of models. OpenAI slowed hiring because a single high-leverage engineer with agent orchestration completes in 20 minutes what used to take weeks. The self-acceleration loop is real, and it is compressing the timeline for organizational adaptation.

Four frontier models shipped in fourteen days in February 2026. Every release cycle raises the ceiling on what agents can execute. The gap between organizations that can manage an AI workforce and those that cannot is widening with every release.

The window to build this infrastructure is not closing dramatically. It is getting shorter with every model release.

It Was Never About the Technology

Every post in this series has circled the same insight from a different angle. Systematic workflows beat raw capability. Intelligent architecture beats brute-force context. Specification is the new scarcity.

This post is the layer underneath.

None of it works if the organization itself is not redesigned. Perfect Agent Skills, elegant hierarchical navigation, rigorous holdout validation, precise intent engineering. If the humans using these systems are stuck at the 101 level, if the processes were never audited, if trust was declared instead of engineered, if the stages were skipped, the tools produce expensive failure at unprecedented speed.

The technology was never the hard part. Every previous technology wave, from mainframes to cloud to mobile, the winners were not the early adopters. They were the ones who redesigned the organization to absorb the technology.

AI is no different. The stakes are higher. The window is shorter.

80% of enterprise AI initiatives fail. Not because the models are weak. Because the organization was never rebuilt.

The agents work. The organization does not.

Build the organization. The agents are waiting.

Resources

- McKinsey Superagency Report: mckinsey.com

- DORA 2025 Report: DevOps Research and Assessment Annual Report

- Hyperadaptive Model: itrevolution.com

- WORKBank Automation Research: arxiv.org/abs/2506.06576

- McKinsey Burnout Research: mckinsey.org

- Gartner 2026 Strategic Technology Trends: gartner.com

- The Road Runner Economy: nraford7.github.io

- Deloitte State of AI in the Enterprise 2026: deloitte.com/us/state-of-ai

- WEF AI at Work: From Productivity Hacks to Organizational Transformation: weforum.org

- Stanford/ADP “Canaries in the Coal Mine”: Brynjolfsson et al., Stanford Digital Economy Lab

- Related posts: Procedure Over Intelligence · Beyond the Million-Token Window · The Bottleneck Moved